This is the last article in the three-part series “Configuring a Cassandra Cluster in the Cloud”. The first article covered the architecture of the Cassandra Cluster and the prerequisites, the second walked through the setup process for installing Cassandra, and this one develops all the steps needed to complete our solution in Azure. This series uses Azure as the cloud platform; however, the first two articles can be considered platform-agnostic in the mechanisms and approaches used for the main configuration of the Cassandra Cluster.

This final article is organized into the following sections, where I will show you every Azure command required to complete the puzzle.


Executing Azure Commands

For the rest of this article we will be executing a group of Azure instructions for different tasks, and each of these commands has to be run through either the Azure CLI or Cloud Shell. For the Azure CLI, assuming you are using Windows, download the installer from the following link:

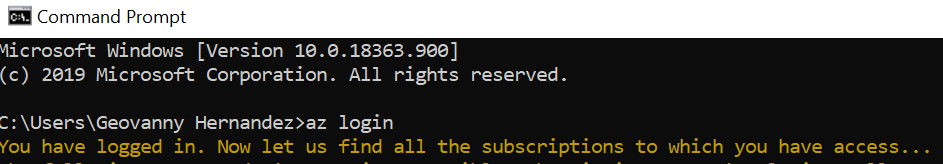

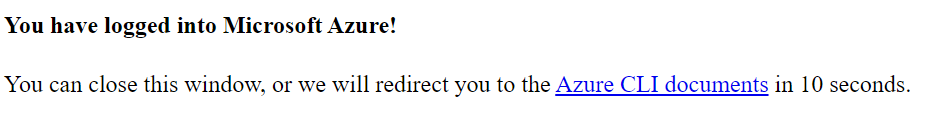

Once you have installed the Azure CLI, open PowerShell or a command prompt and log in with the command az login. This command automatically opens a browser so that you can sign in to your Azure account, as the image below shows:

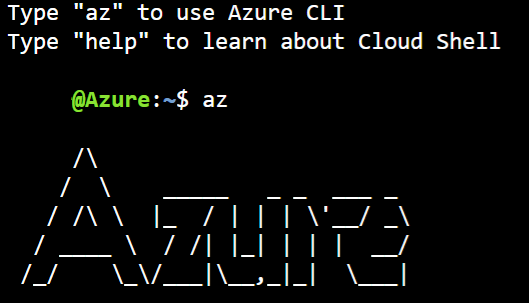

What do you need to do now? Start executing the commands you need in your Azure CLI instance. Another simple alternative is Cloud Shell: click the Cloud Shell icon inside the Azure portal and type az to start using the Azure CLI.

Feel free to use whichever Azure CLI interface best fits your needs.
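Before running the commands in the rest of the article, it can help to confirm which of the two options is available in your current shell. The snippet below is a minimal sketch that only checks whether an az executable is on the PATH; the function name az_cli_state is my own and is not part of the Azure CLI.

```shell
#!/bin/sh
# Report whether the Azure CLI executable is on PATH.
# Prints "found" or "missing"; it does not check whether you are logged in.
az_cli_state() {
  if command -v az >/dev/null 2>&1; then
    echo "found"
  else
    echo "missing"
  fi
}

case "$(az_cli_state)" in
  found)   echo "Azure CLI detected; run 'az login' if you have not signed in yet." ;;
  missing) echo "Azure CLI not found; install it or use Cloud Shell instead." ;;
esac
```

Once the CLI is detected and you have logged in, az account show should print the subscription that the following commands will run against.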

Generalize the VM

Generalizing an Azure VM is a process that prepares the machine to be used as an image. Keep in mind that any personal account information is removed as part of this process; you can find more details at this link:

https://docs.microsoft.com/es-es/azure/virtual-machines/windows/upload-generalized-managed

At this point, remember that once you generalize the VM there is no way back: you will no longer be able to access the VM or apply any change to it. Be careful, and only start this step once you are sure there is nothing left to do on the VM.

First, if your VM is running you have to stop it, and then you must deallocate it. Remember that in the first article we already created the resource group cassandra-group, and we will keep using it for the rest of the Azure configuration. The name we will use for the new image is my_image_cassandra-template.

Open an Azure CLI instance and execute the following commands:

az vm deallocate --resource-group cassandra-group --name cassandra-template

az vm generalize --resource-group cassandra-group --name cassandra-template

az image create -g cassandra-group -n my_image_cassandra-template --source cassandra-template --location uksouth
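Because generalizing cannot be undone, it may be worth previewing the three commands before they touch the VM. The following is a minimal sketch of that idea: it prints the commands by default and only executes them when an APPLY=1 environment variable is set. The run helper, the capture_image function and the APPLY switch are my own illustrative names; the az commands and resource names are the ones used above.

```shell
#!/bin/sh
# Dry-run helper: prints each command unless APPLY=1 is set in the environment.
run() {
  if [ "${APPLY:-0}" = "1" ]; then
    "$@"
  else
    echo "$*"
  fi
}

RESOURCE_GROUP="cassandra-group"
SOURCE_VM="cassandra-template"
IMAGE_NAME="my_image_cassandra-template"
LOCATION="uksouth"

capture_image() {
  # Order matters: deallocate, then generalize, then create the image.
  # The && chain stops at the first command that fails.
  run az vm deallocate --resource-group "$RESOURCE_GROUP" --name "$SOURCE_VM" &&
  run az vm generalize --resource-group "$RESOURCE_GROUP" --name "$SOURCE_VM" &&
  run az image create --resource-group "$RESOURCE_GROUP" --name "$IMAGE_NAME" \
      --source "$SOURCE_VM" --location "$LOCATION"
}

capture_image
```

Saved as a script, running it without APPLY only lists the three commands; running it with APPLY=1 in the environment executes them in order.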

Configuring the Azure Virtual Net (VNet)

As I explained in the first article, where I showed a diagram of the proposed architecture for our Cassandra Cluster solution, the VNet is the first layer, where the subnet and the nodes will interact. The location plays a key role, so we must keep it the same across every resource. With that said, we are going to create our VNet by executing the following command in the Azure CLI (remember to change parameters such as --resource-group or --name if you want to customize the code I propose).

# Change the location according to the requirements of your application; you can get the list of locations with the following command

# az account list-locations -o table

az network vnet create --resource-group cassandra-group --name cassandra-vnet --location "UK South"

Configuring the Azure Subnet

Once you have created the VNet, the next step is to define the subnet. In simple words, a subnet lets you segment your virtual network into one or more subnetworks, each with its own range of the virtual network's address space. In this article we will use a simple range, 10.0.0.0/24. If you are customizing the configuration, remember to update --vnet-name to your own value.

az network vnet subnet create --address-prefix 10.0.0.0/24 --name cassandra-subnet --resource-group cassandra-group --vnet-name cassandra-vnet
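To get a feeling for how many nodes a range like this can hold, the arithmetic can be sketched in a few lines. Azure reserves five addresses in every subnet (the network address, the default gateway, two addresses for Azure DNS, and the broadcast address), so the first assignable address in 10.0.0.0/24 is 10.0.0.4. The function name usable_addresses is my own.

```shell
#!/bin/sh
# Usable addresses in an Azure subnet for a given prefix length:
# total addresses in the range minus the 5 that Azure reserves.
usable_addresses() {
  prefix_length=$1
  echo $(( (1 << (32 - prefix_length)) - 5 ))
}

usable_addresses 24   # prints 251
```

A /24 is therefore far more than a three-node cluster needs, which leaves room to add nodes later without touching the subnet.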

Set up public IPs

We need to create new public IPs because we want to attach them to the new NICs that we will create shortly.

az network public-ip create --resource-group "cassandra-group" --name "cassandra-ip-1" --location "UK South"

az network public-ip create --resource-group "cassandra-group" --name "cassandra-ip-2" --location "UK South"

az network public-ip create --resource-group "cassandra-group" --name "cassandra-ip-3" --location "UK South"

az network public-ip create --resource-group "cassandra-group" --name "cassandra-ip-4" --location "UK South"
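Since the four commands differ only in the number at the end of the name, they can also be generated with a loop. This is a minimal sketch that prints the commands rather than running them; the function name public_ip_commands is my own, and I use the short location name uksouth so that the printed lines need no quoting.

```shell
#!/bin/sh
RESOURCE_GROUP="cassandra-group"
LOCATION="uksouth"

# Print one 'az network public-ip create' command per index, from 1 to count.
public_ip_commands() {
  count=$1
  i=1
  while [ "$i" -le "$count" ]; do
    echo "az network public-ip create --resource-group $RESOURCE_GROUP --name cassandra-ip-$i --location $LOCATION"
    i=$((i + 1))
  done
}

public_ip_commands 4
```

After reviewing the printed lines, you can execute them by piping the output to a shell, for example public_ip_commands 4 | sh.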

Configuring NICS

At this point we have executed the az commands to create a VNet and a subnet, so we can continue with the configuration of the NICs. NIC stands for network interface, and this component provides the connection between a VM and a virtual network. Every VM must have at least one NIC; however, it is perfectly feasible to attach multiple NICs to one VM so that the VM can communicate through different subnets. Our example does not need that.

Let me configure a group of three NICs, one for each node of the Cassandra Cluster, with the instructions below:

az network nic create --resource-group "cassandra-group" --name "cassandra-nic-1" --vnet-name "cassandra-vnet" --subnet "cassandra-subnet" --public-ip-address "cassandra-ip-1" --private-ip-address "10.0.0.4" --location "UK South"

az network nic create --resource-group "cassandra-group" --name "cassandra-nic-2" --vnet-name "cassandra-vnet" --subnet "cassandra-subnet" --public-ip-address "cassandra-ip-2" --private-ip-address "10.0.0.6" --location "UK South"

az network nic create --resource-group "cassandra-group" --name "cassandra-nic-3" --vnet-name "cassandra-vnet" --subnet "cassandra-subnet" --public-ip-address "cassandra-ip-3" --private-ip-address "10.0.0.8" --location "UK South"
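The NIC commands follow one pattern per node: NIC number, public IP and private IP go together. The sketch below makes that pairing explicit, assuming each NIC is attached to the public IP with the same number, since a public IP can be associated with only one NIC at a time. It only prints the commands; the function name nic_command is my own.

```shell
#!/bin/sh
RESOURCE_GROUP="cassandra-group"
VNET="cassandra-vnet"
SUBNET="cassandra-subnet"
LOCATION="uksouth"

# Print the 'az network nic create' command for one node.
# $1 = node number (selects the NIC and public IP names), $2 = private IP.
nic_command() {
  node=$1
  private_ip=$2
  echo "az network nic create --resource-group $RESOURCE_GROUP --name cassandra-nic-$node" \
       "--vnet-name $VNET --subnet $SUBNET --public-ip-address cassandra-ip-$node" \
       "--private-ip-address $private_ip --location $LOCATION"
}

# Node number and private IP pairs used in this article.
nic_command 1 10.0.0.4
nic_command 2 10.0.0.6
nic_command 3 10.0.0.8
```

Keeping the node number as the single input makes it harder to attach two NICs to the same public IP by mistake.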

Once you have created the NICs, you can verify them with the following command:

az network nic list --resource-group "cassandra-group" --output json | grep "cassandra-nic"

You can find more information about NIC configuration in this article:

https://docs.microsoft.com/en-us/azure/virtual-network/virtual-network-network-interface-vm

Deploying new Nodes

It is time to create each new node of our Cassandra Cluster. We have already created the image, VNet, subnet, and NICs, so we only need to combine all these components. The new nodes will be named cass01, cass02, and cass03. I am using a placeholder for the password and a generic username, which you can change.

az vm create --resource-group cassandra-group --name cass01 --image my_image_cassandra-template --admin-username azureuser --admin-password {Password_Here} --location uksouth --nics cassandra-nic-1

az vm create --resource-group cassandra-group --name cass02 --image my_image_cassandra-template --admin-username azureuser --admin-password {Password_Here} --location uksouth --nics cassandra-nic-2

az vm create --resource-group cassandra-group --name cass03 --image my_image_cassandra-template --admin-username azureuser --admin-password {Password_Here} --location uksouth --nics cassandra-nic-3
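As with the NICs, the three VM commands differ only in the node number, so they can be generated from one template. In this sketch the password is not written into the script: the printed command refers to an environment variable, which I have called ADMIN_PASSWORD (a hypothetical name, as is the vm_command function), so that it is read only when the command is executed.

```shell
#!/bin/sh
RESOURCE_GROUP="cassandra-group"
IMAGE="my_image_cassandra-template"
LOCATION="uksouth"
ADMIN_USER="azureuser"

# Print the 'az vm create' command for node N (cass0N with cassandra-nic-N).
# The password stays as a literal "$ADMIN_PASSWORD" reference in the output.
vm_command() {
  node=$1
  echo "az vm create --resource-group $RESOURCE_GROUP --name cass0$node --image $IMAGE" \
       "--admin-username $ADMIN_USER --admin-password \"\$ADMIN_PASSWORD\"" \
       "--location $LOCATION --nics cassandra-nic-$node"
}

for node in 1 2 3; do
  vm_command "$node"
done
```

To run the printed commands, export ADMIN_PASSWORD in your session first and then paste them, or pipe the loop's output to a shell.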

The result should look like this Azure portal image:

Updating the private IP per Node

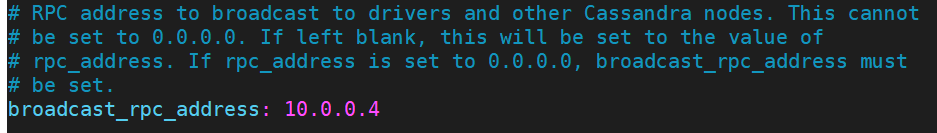

Our nodes are now almost ready; however, we still need to update the private IP inside the cassandra.yaml file on each one so that it matches the NIC configured earlier. That is, the private IP must be 10.0.0.4 for node cass01, 10.0.0.6 for cass02, and 10.0.0.8 for cass03. I will show an example for node cass01: edit the file with the following command inside the cass01 node.

vi $CASSANDRA_HOME/conf/cassandra.yaml

Here we must update the broadcast_rpc_address property and assign the value mentioned above.

Note: Remember to update the broadcast property on every node.
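If you prefer not to edit the file by hand on every node, the same change can be scripted. The following is a minimal sketch, assuming the property is the one cassandra.yaml names broadcast_rpc_address and that it appears once in the file, either active or commented out with a leading "# ". The function name set_yaml_value is my own.

```shell
#!/bin/sh
# Set "key: value" in a YAML file by rewriting the existing top-level line
# for that key, whether it is active ("key: ...") or commented ("# key: ...").
# Writes to a temporary file first so the original is replaced only on success.
set_yaml_value() {
  file=$1
  key=$2
  value=$3
  tmp="$file.tmp"
  sed "s|^\(# \)\{0,1\}$key:.*|$key: $value|" "$file" > "$tmp" && mv "$tmp" "$file"
}

# Example invocation on node cass01 (uncomment to apply):
# set_yaml_value "$CASSANDRA_HOME/conf/cassandra.yaml" broadcast_rpc_address 10.0.0.4
```

On cass02 and cass03 the call is the same with 10.0.0.6 and 10.0.0.8. It is a good idea to keep a backup copy of cassandra.yaml before running it.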

Running our new Cassandra Cluster

Now that we have configured every node and updated cassandra.yaml, we should start all the nodes that are part of the new Cassandra Cluster. In general the order does not matter; however, for a node to be able to join the cluster, the seed nodes must always be started first, so we are going to start with nodes cass01 and cass02. The process is easy: in the Azure portal, select all the VMs and click the Start button, then connect to every node and start the Cassandra service as the image shows.

cassandra -R

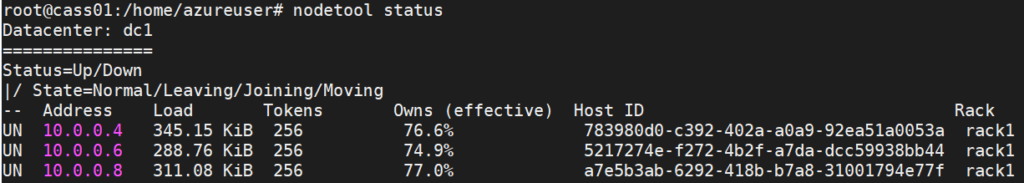

After starting Cassandra on every node involved, we can finally check all the nodes that are part of our new Cassandra Cluster using nodetool status.
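In the nodetool status output, each node line starts with a two-letter code, and UN (Up/Normal) is the state we want for all three nodes. A small helper can count those lines for you; this is a minimal sketch and the function name count_up_normal is my own.

```shell
#!/bin/sh
# Count the nodes reported as Up/Normal ("UN") in 'nodetool status' output
# read from standard input. Prints 0 when no such line is present.
count_up_normal() {
  awk '$1 == "UN" { n++ } END { print n + 0 }'
}

# Usage on any node of the cluster (uncomment to run):
# nodetool status | count_up_normal
```

For the cluster built in this article the expected count is 3; a lower number means at least one node has not joined yet or is down.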

Now you can start to play with your new Cassandra Cluster. My simple advice is to begin by creating a new keyspace and a test table to check how the data is distributed across the nodes of your cluster. I encourage you to use the Cassandra Academy courses to learn more about this great NoSQL database technology. In the future I will use this cluster to keep exploring and explaining more interesting features of Cassandra.
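As a starting point for that experiment, here is a minimal sketch that prints the CQL for a test keyspace and table. The keyspace lab, the table readings and its columns are hypothetical names chosen for illustration, and the replication factor of 3 simply matches the three nodes of this cluster.

```shell
#!/bin/sh
# Print CQL that creates a test keyspace replicated to all three nodes
# and a small table partitioned by sensor_id.
test_schema_cql() {
  cat <<'CQL'
CREATE KEYSPACE IF NOT EXISTS lab
  WITH replication = {'class': 'SimpleStrategy', 'replication_factor': 3};
CREATE TABLE IF NOT EXISTS lab.readings (
  sensor_id int,
  reading_time timestamp,
  temperature double,
  PRIMARY KEY (sensor_id, reading_time)
);
CQL
}

test_schema_cql
# To apply it from a node (uncomment to run):
# test_schema_cql > /tmp/test_schema.cql && cqlsh 10.0.0.4 -f /tmp/test_schema.cql
```

After inserting a few rows, nodetool getendpoints lab readings 1 lists the nodes that hold the partition for sensor_id 1. With a lower replication factor you would see the partitions spread over different subsets of the nodes.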

Summary

We have now completed all the steps required to have a fully functional Cassandra Cluster. You can continue adding more nodes, or simply keep it as a small laboratory for learning and acquiring more knowledge about Cassandra. As you have probably observed, the first two articles of this series were generic and platform-agnostic, while this last article went into the details specific to Azure. In the future I will continue publishing articles dedicated to Cassandra, and I might extend this series with examples for the AWS platform. If you have any comment or advice, feel free to contact me by email, and happy coding!
